Mississippi State University’s (MSU) Quality Enhancement Plan (QEP), Bulldog Experience, uses direct and indirect measures, qualitative and quantitative measures, and internal and external instruments to evaluate the success of the initiative.

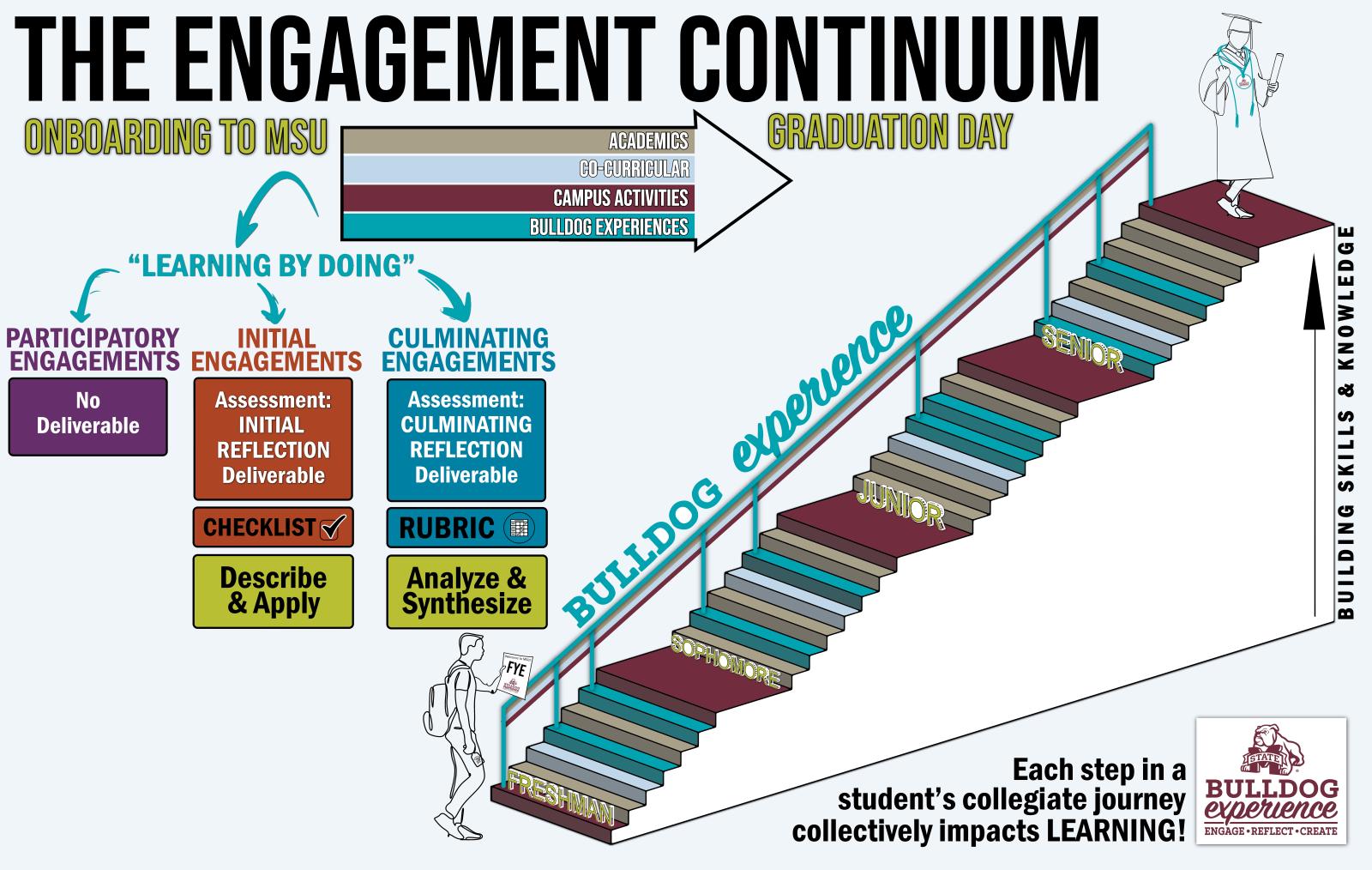

The assessment plan tracks student engagement in participatory, initial, and culminating engagements through a university-wide platform as well as systematic correspondence with faculty and other partners.

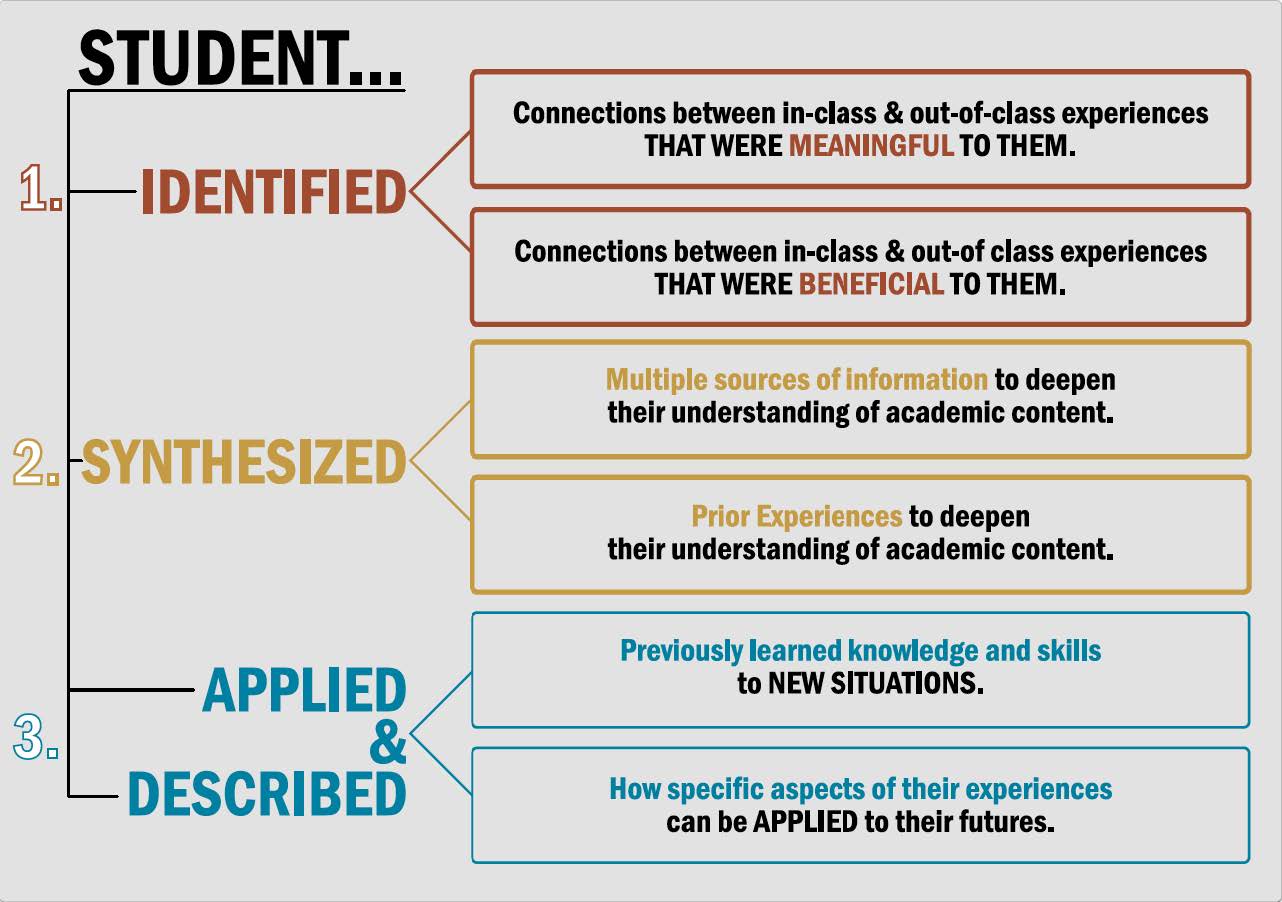

Student Learning Outcomes

As a result of Bulldog Experience, MSU students will be able to:

- Identify connections between in-class and out-of-class experiences that were meaningful and beneficial to them.

- Synthesize multiple sources of information, including prior experience, to deepen their understanding of academic content.

- Apply previously learned knowledge and skills to new situations or scenarios.

Reflection Prompts

- Describe the experience by explaining “what, where, when, who, why and how”.

  What was involved in the experience? Where did the experience take place? When did you complete the experience (use specific dates and time frames)? Who provided or sponsored the experience? Why did you complete the experience? How did you complete the experience?

- What knowledge and skills previously acquired through in-class and out-of-class experiences did you use during the experience?

  State specific knowledge and specific skills separately. For Bulldog Experience, “in-class” means the Experiential Learning experience was a required part of a class but may have occurred outside of a classroom; for example, completing a required service project. For Bulldog Experience, “out-of-class” means the experience was not part of a class; for example, attending and engaging in the International Fiesta.

- Explain what you learned during the experience and how it aligns with your academic program of study.

  Be sure to identify specific courses you have taken as part of your academic program of study (degree program) when aligning them with what you learned during the experience. Be as specific as you can in stating what you learned. Make as many connections as you can between what you learned and previous courses taken as part of your program of study (degree program).

- In what ways was the experience meaningful and beneficial to you?

  When describing how the experience was meaningful, explain how it was valuable to you or to the organization responsible for providing the experience, or how it had value for the greater good of society. When describing how the experience was beneficial, explain how it was purposeful or helpful to you in building new knowledge and skills.

- What did you learn during the experience that will be helpful to you in your future?

  Be as specific as you can and include as many different things that you learned as you can. Explain how each thing that you learned will be helpful to you in the future.

The Engagement Continuum

Baseline Data for MSU

Degree Program Offerings

The following data represent the internship and capstone course requirements for all existing degree programs at Mississippi State University. Of the 84 degree programs offered, 62 (73.8% of all programs) require either an internship or a capstone course. Of these, 8 degree programs (9.5% of all programs) require both an internship and a capstone course. The remaining 22 degree programs (26.2% of all programs) require neither.

| Requirement | Number of Programs | Percentage of Programs |
| --- | --- | --- |
| Internship or Capstone Required | 62 | 73.8% |
| Neither Internship nor Capstone Required | 22 | 26.2% |
| Internship and Capstone Required | 8 | 9.5% |
| Internship Required | 22 | 26.2% |
| Capstone Required | 48 | 57.1% |
| Total Programs | 84 | - |
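The counts in this table are related by simple inclusion-exclusion: programs requiring either an internship or a capstone equal those requiring an internship plus those requiring a capstone, minus those requiring both. The following is a minimal sketch of that check, using only the counts reported above; the variable names and the `pct` helper are illustrative assumptions, not part of any MSU reporting tool.

```python
# Counts copied from the degree program table above.
total_programs = 84
internship_required = 22
capstone_required = 48
both_required = 8

# Inclusion-exclusion: programs counted in both groups are subtracted once.
either_required = internship_required + capstone_required - both_required
neither_required = total_programs - either_required


def pct(count, total=total_programs):
    """Return count as a percentage of total, rounded to one decimal place."""
    return round(100 * count / total, 1)


summary = {
    "Internship or Capstone Required": either_required,
    "Neither Internship nor Capstone Required": neither_required,
    "Internship and Capstone Required": both_required,
    "Internship Required": internship_required,
    "Capstone Required": capstone_required,
}

for label, count in summary.items():
    print(f"{label}: {count} ({pct(count)}%)")
```

Running the sketch reproduces the 62 and 22 program counts and the percentages listed in the table.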

When the results are aggregated by college, four of the colleges/units reported that all of their degree programs require either an internship or a capstone course. The remaining colleges/units vary in the share of degree programs that require either an internship or a capstone course, as shown in the table below.

| Unit | Degree Programs Offered | Degree Programs Requiring Internship or Capstone | Percentage of Programs |
| --- | --- | --- | --- |
| Academic Affairs | 2 | 1 | 50.0% |
| Agriculture and Life Sciences | 17 | 14 | 82.4% |
| Architecture, Art, and Design | 4 | 3 | 75.0% |
| Arts & Sciences | 27 | 11 | 40.7% |
| Business | 8 | 8 | 100.0% |
| Education | 8 | 8 | 100.0% |
| Engineering | 13 | 12 | 92.3% |
| Forest Resources | 4 | 4 | 100.0% |
| Veterinary Medicine | 1 | 1 | 100.0% |
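The per-unit percentages and the university-wide totals can be cross-checked against each other. The sketch below is one way to do so, assuming the figures in the table above; the `units` dictionary and the other names are hypothetical and exist only for illustration.

```python
# (programs offered, programs requiring an internship or capstone) per unit,
# copied from the table above.
units = {
    "Academic Affairs": (2, 1),
    "Agriculture and Life Sciences": (17, 14),
    "Architecture, Art, and Design": (4, 3),
    "Arts & Sciences": (27, 11),
    "Business": (8, 8),
    "Education": (8, 8),
    "Engineering": (13, 12),
    "Forest Resources": (4, 4),
    "Veterinary Medicine": (1, 1),
}

# Share of each unit's programs that require an internship or capstone.
percentages = {
    name: round(100 * requiring / offered, 1)
    for name, (offered, requiring) in units.items()
}

# University-wide totals, which should match the overall figures (84 and 62).
total_offered = sum(offered for offered, _ in units.values())
total_requiring = sum(requiring for _, requiring in units.values())

# Units in which every degree program carries the requirement.
fully_covered = [
    name for name, (offered, requiring) in units.items() if offered == requiring
]

for name, share in percentages.items():
    print(f"{name}: {share}%")
print(f"Total: {total_requiring} of {total_offered} programs")
print(f"Units at 100%: {', '.join(fully_covered)}")
```

The unit rows sum to the same 84 programs offered and 62 programs with a requirement reported for the university as a whole, and four units are at 100%.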

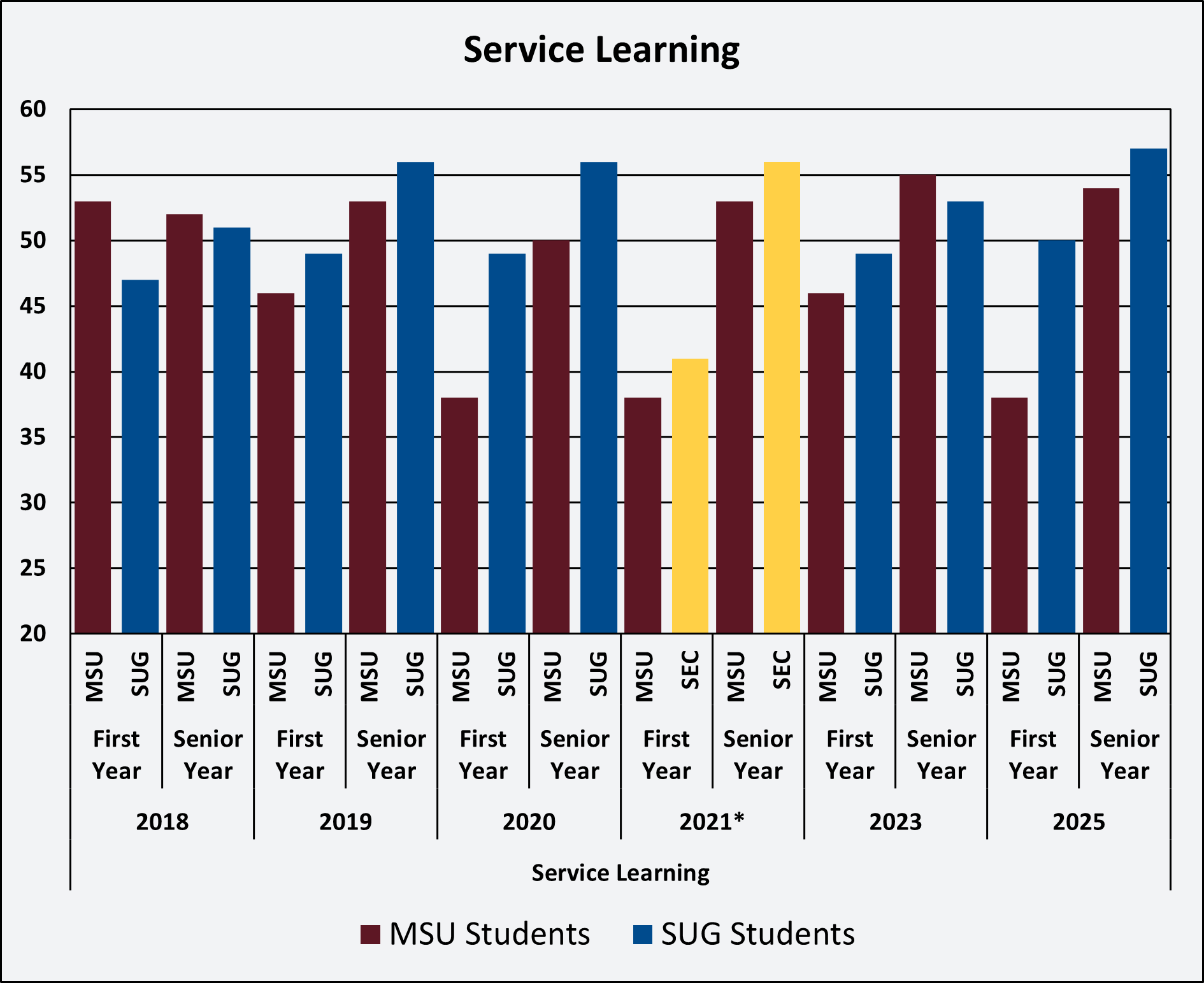

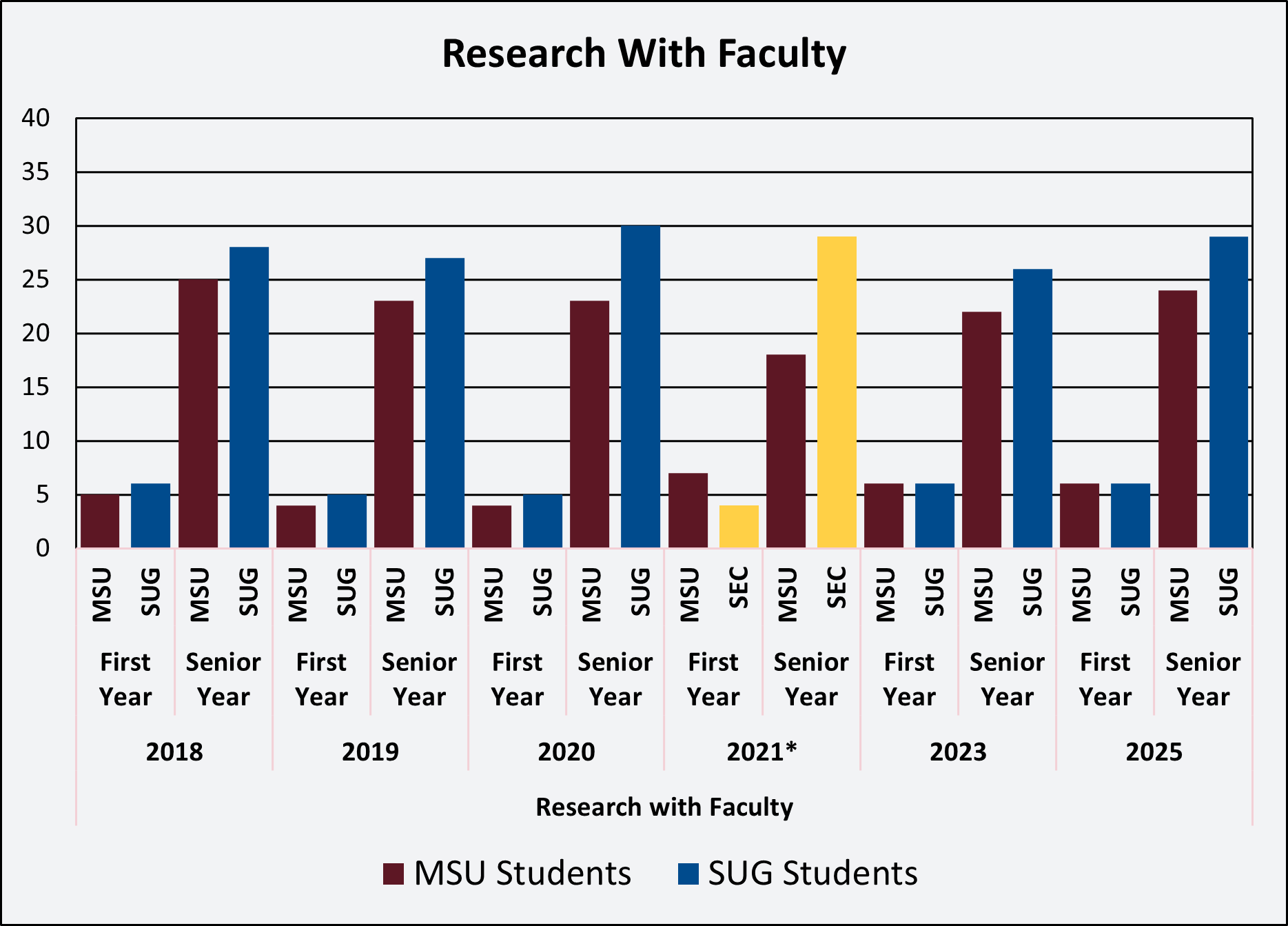

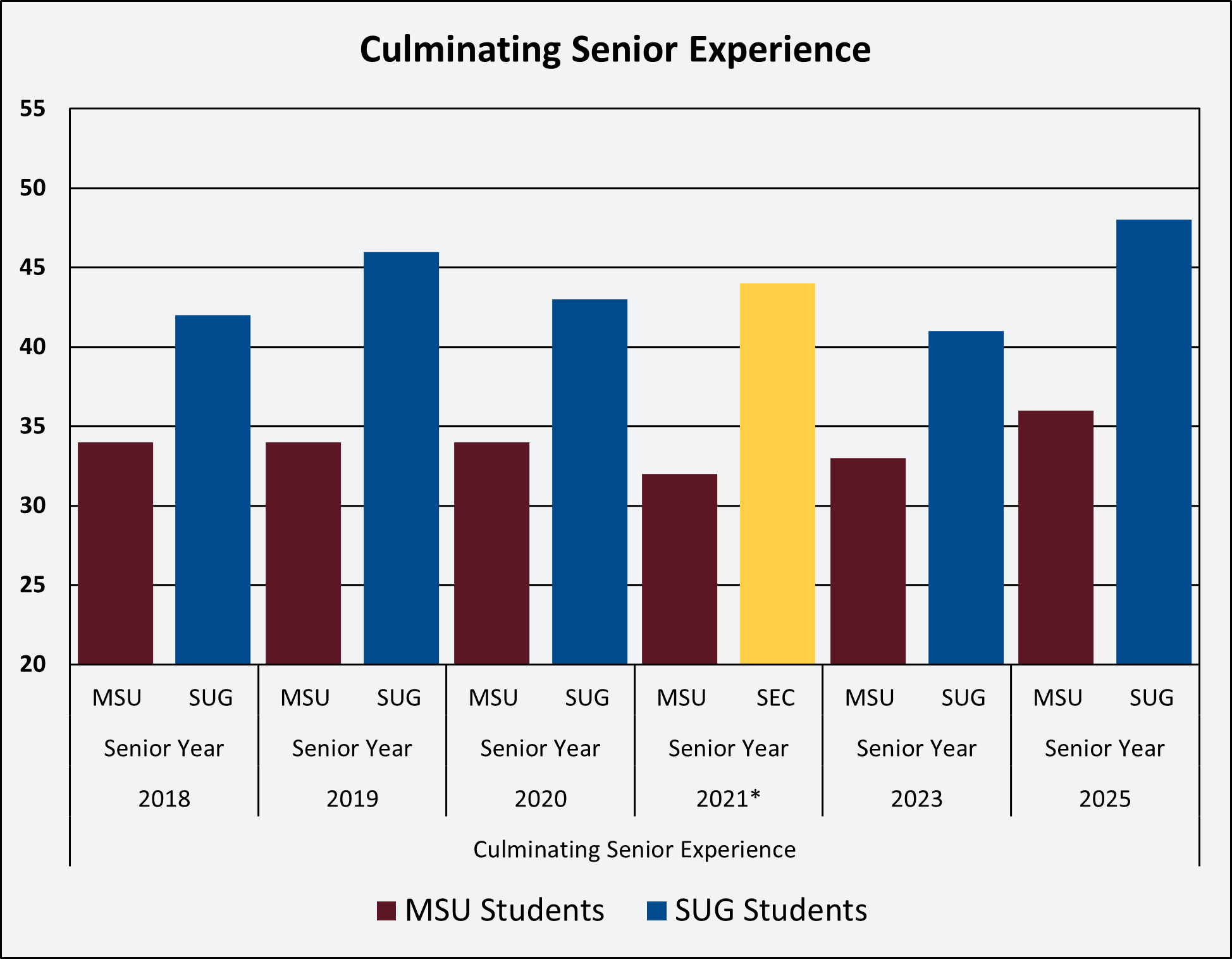

National Survey of Student Engagement

The National Survey of Student Engagement (NSSE) represents an indirect, external measure that has been standardized with hundreds of four-year colleges and universities. The NSSE measures students’ engagement with coursework and studies and how the university motivates students to participate in activities that enhance student learning. This survey is used to identify practices that institutions can adopt or reform to improve the learning environment for students.

Each year, the Office of Institutional Research and Effectiveness deploys the online survey to freshmen and seniors. The institution then compares its results to a group of peers from the same Carnegie classification, from a peer group determined by the NSSE examiners, and from a group of peers that MSU has identified.

NSSE Survey Results

Engagement Indicators

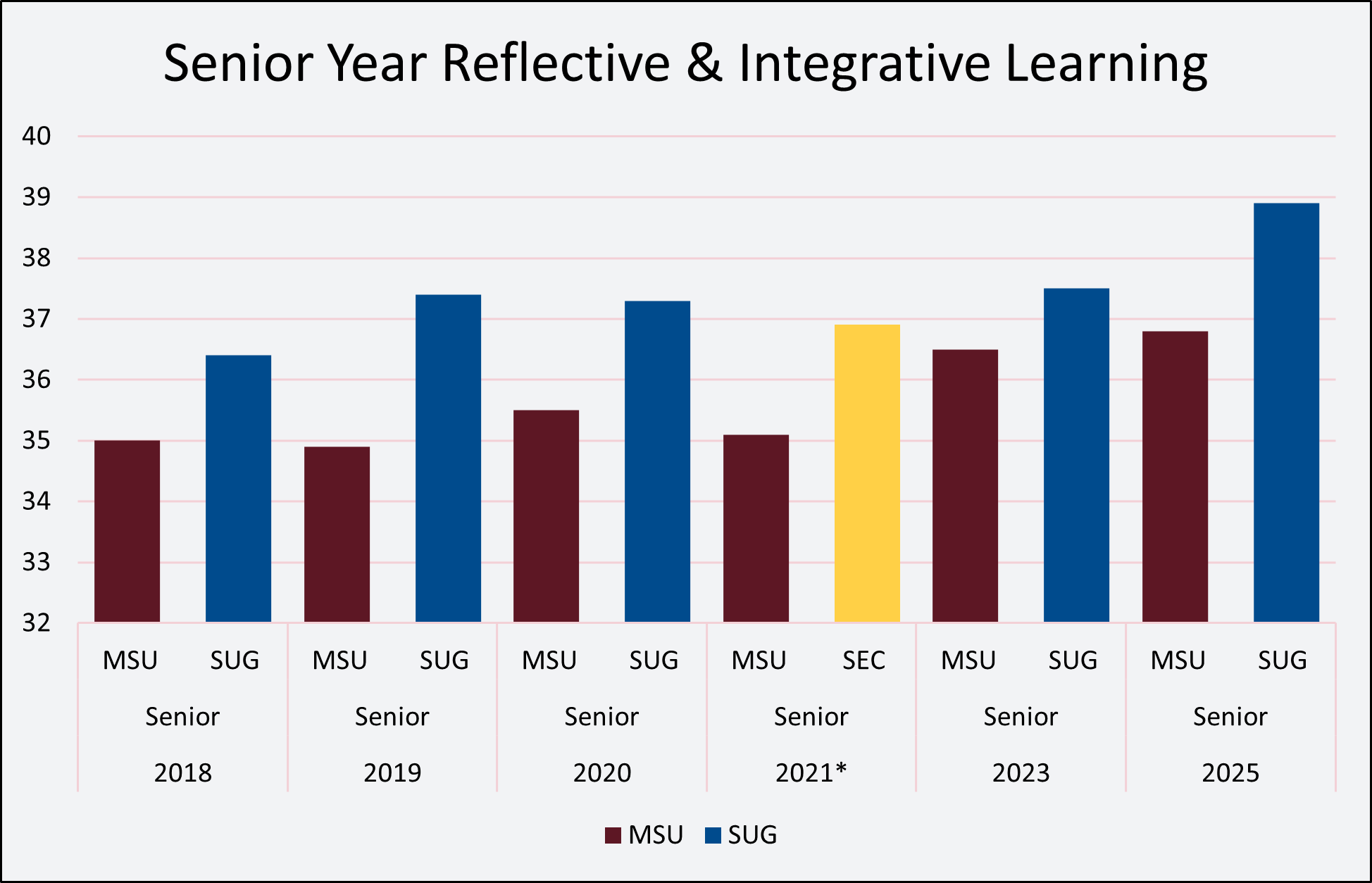

The reflective and integrative learning engagement indicator assesses how students personally connect with course materials. It seeks to show how those connections relate to student understanding and experiences. Instructors who emphasize reflective and integrative learning in their classes motivate students to make connections between their learning and the world around them. The table below shows how Mississippi State University students compare to our Southern University Group (SUG) peers.

High Impact Practices

Due to their positive associations with student learning and retention, certain undergraduate opportunities are designated "high-impact." High-Impact Practices (HIPs) demand considerable time and effort, facilitate learning outside of the classroom, require meaningful interactions with faculty and students, encourage collaboration with others, and provide frequent and substantive feedback. Participation can be life-changing (Kuh, 2008).